Overview of QIIME 2 Plugin Workflows¶

Note

Abandon all hope, ye who enter, if you have not read the glossary. 👺

Note

This is a guide for novice QIIME 2 users, and particularly for those who are new to microbiome research. For experienced users who are already well versed in microbiome analysis, and those who are averse to uncontrolled use of emoji, mosey on over to the overview tutorial for experienced users.

Welcome all newcomers 👋. This guide will give you an overview of many of the main plugins and actions available in QIIME 2, and guide you to the relevant tutorials for deeper exploration. In other words, it might not answer the question, “how do I use QIIME 2”, but it will point you in the right direction. Consider this your treasure map: QIIME 2 actions are the stepping stones on your path to glory, and the flowcharts below will tell you where all the goodies are buried. 🗺️

Remember, many paths lead from the foot of the mountain, but at the peak we all gaze at the same moon. 🌕

Let’s get oriented: flowcharts¶

Before we begin talking about specific plugins and actions, we will discuss a conceptual overview of a standard QIIME 2 Amplicon Distribution workflow for analyzing amplicon sequence data. And before we look at that overview, we must look at the key to our treasure map:

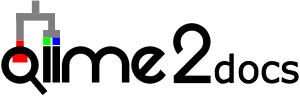

Each type of data (i.e., Artifacts and Visualizations) and action (i.e., methods, visualizers, and pipelines) is represented by a different color-coded node. The edges connecting each node are either solid (representing either required input or output) or dashed (representing optional input). Don’t know what these terms mean? Arrgh! Read the note at the top of this page.

In the flowcharts below:

Actions are labeled with the name of the plugin and the name of the action. To use that action, type “qiime” and then the text contained in that node. E.g.,

qiime demux emp-single.Note that pipelines are a special type of action that run multiple actions in a single command. Neat! In (some of) the flowcharts, the pipelines are displayed as boxes encompassing the actions that are run inside. 🌯

Artifacts are labeled with the semantic type of that file. Don’t worry - you will not need to type out those long names unless you need to import a file into that format.

Visualizations are variously labeled as “visualization,” some name that represents the information shown in that visualization, or replaced with an image representing some of the tasty information you might find inside that visualization… 🍙

Useful points for beginners¶

Just a few more important points before we go further:

The guide below is not exhaustive by any means. It only covers some of the chief actions in most of the “core” QIIME 2 plugins. There are many more actions and plugins to discover. Curious to learn more? See all installed plugins by typing

qiime --helpinto your terminal (and hit “enter”). See all actions in thedemuxplugin by typingqiime demux --help. Learn about theemp-singlemethod indemuxby typingqiime demux emp-single --help. Now you know how to access the help documentation for all other plugins and actions. 😊The flowcharts below are designed to be as simple as possible, and hence omit many of the inputs (particularly optional inputs and metadata) and outputs (particularly statistical summaries and other minor outputs) and all of the possible parameters from most actions. Many additional actions (e.g., for displaying statistical summaries or fiddling with feature tables 🎻) are also omitted. Now that you know all about the help documentation, use it to learn more about individual actions, and other actions present in a plugin (hint: if a plugin has additional actions not described here, they are probably used to examine the output of other actions in that plugin).

Metadata is a central concept in QIIME 2. We do not extensively discuss metadata in this guide, because working with metadata is already thoroughly explained here. Read well and go far. 📚

Artifacts (

.qza) and visualizations (.qzv) files are really just zipped archives containing one or more data files and accessory files containing provenance information. You can justunzipan artifact/visualization at any time to peek inside, but the better way to do this is to useqiime tools exportas detailed in our exporting tutorials. Give it a spin and see if you can figure out what types of file formats are stored inside different types of artifacts! If you really want to read more about the structure of artifact/visualization files, read on. 🤓There is no one way to do things in QIIME 2. Nor is there a “QIIME 2” approach. Most of the plugins and actions in QIIME 2 are independent software or pre-existing methods. QIIME is the glue that makes the magic happen. Many paths lead from the foot of the mountain… ⛰️

Do not forget to cite appropriately! Unsure what to cite? To see the citations for a specific action or plugin, type in the help commands that you learned above, but replace

--helpwith--citations. Even better: go to https://view.qiime2.org/ and drag and drop any QIIME 2 artifact or visualization into the window. Provided that file was generated in QIIME 2018.4+, the “citations” tab should contain information on all relevant citations used for the generation of that file. Groovy. 😎

💃💃💃

Conceptual overview of QIIME 2¶

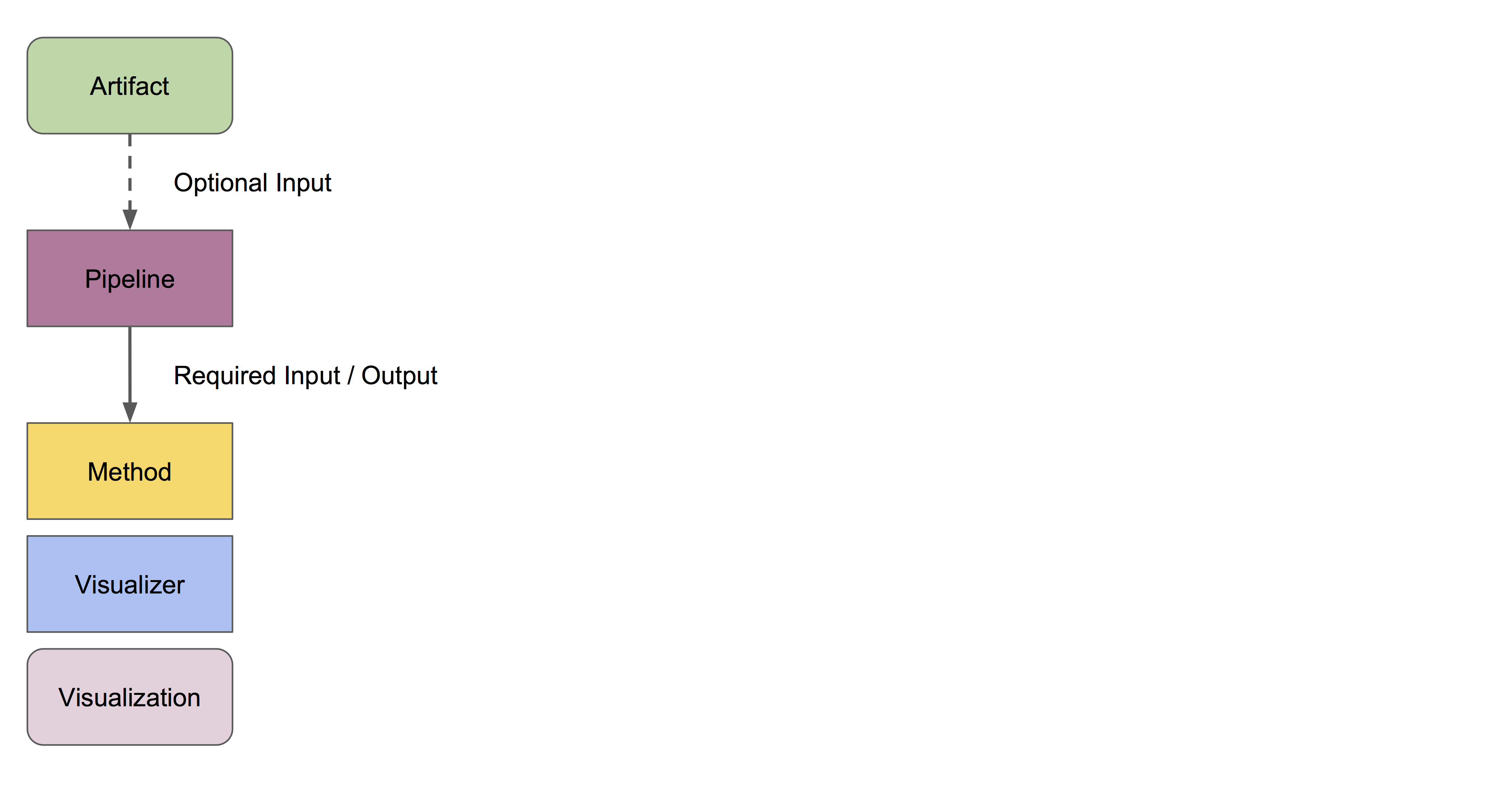

Now that we have read the glossary and key, let us examine a conceptual overview of the various possible workflows for examining amplicon sequence data:

The edges and nodes in this overview do not represent specific actions or data types, but instead represent conceptual categories, e.g., the basic types of data or analytical goals we might have in an experiment. All of these steps and terms are discussed in more detail below.

All data must be imported as a QIIME 2 artifact to be used by a QIIME 2 action (with the exception of some metadata). Different users may enter this workflow at different stages. Most will have some type of raw sequence (e.g., FASTQ or FASTA) data, which should be imported following the appropriate sequence importing scheme. Other users may start with demultiplexed sequence data, or even a feature table given to them by a collaborator. The importing tutorial covers the most common data types that users need to import into QIIME 2.

Now that we understand that we can actually enter into this overview workflow at nearly any of the nodes, let us walk through individual sections.

All amplicon/metagenome sequencing experiments begin, at some point or another, as raw sequence data. This is probably FASTQ data, containing DNA sequences and quality scores for each base.

We must demultiplex these reads to determine which sample each read came from.

- Reads should then be denoised into amplicon sequence variants (ASVs) or clustered into operational taxonomic units (OTUs) to achieve two goals:

reducing sequence errors

dereplicating sequences

The resulting feature table and representative sequences are key pieces of data. Don’t lose them! A feature table is essentially a matrix of samples x observations, i.e., the number of times each “feature” (OTUs, ASVs, etc) is observed in each sample in a data set.

- We can do many things with this feature table. Common analyses include:

Taxonomic classification of sequences (a.k.a., “what species are present?”)

Alpha and beta diversity analyses, or measures of diversity within and between samples, respectively (a.k.a., “how similar are my samples?”)

Many diversity analyses rely on the phylogenetic similarity between individual features. If you are sequencing phylogenetic markers (e.g., 16S rRNA genes), you can align these sequences to assess the phylogenetic relationship between each of your features.

Differential abundance measurements determine which features (OTUs, ASVs, taxa, etc) are significantly more/less abundant in different experimental groups.

This is just the beginning, and many other statistical tests and plotting methods are at your finger tips (QIIME 2) and in the lands beyond. The world is your oyster. Let’s dive in. 🏊

Warning

Whoa! Hold yer horses there hoss! 🏇 We are going to start using some seriously technical language in the following sections 🤓. Did you read up on your semantic types and core concepts? Do so now or proceed at your own risk. ⚡⚡⚡

Demultiplexing¶

Okay! Imagine we have just received some FASTQ data, hot off the sequencing instrument. Most next-gen sequencing instruments have the capacity to analyze hundreds or even thousands of samples in a single lane/run; we do so by multiplexing these samples, which is just a fancy word for mixing a whole bunch of stuff together. How do we know which sample each read came from? This is typically done by appending a unique barcode (a.k.a. index or tag) sequence to one or both ends of each sequence. Detecting these barcode sequences and mapping them back to the samples they belong to allows us to demultiplex our sequences.

Want to get started demultiplexing? You (or whoever prepared and sequenced your samples) should know which barcode belongs to each sample — if you do not know, talk to your lab mates or sequencing center. Include this barcode information in your sample metadata file.

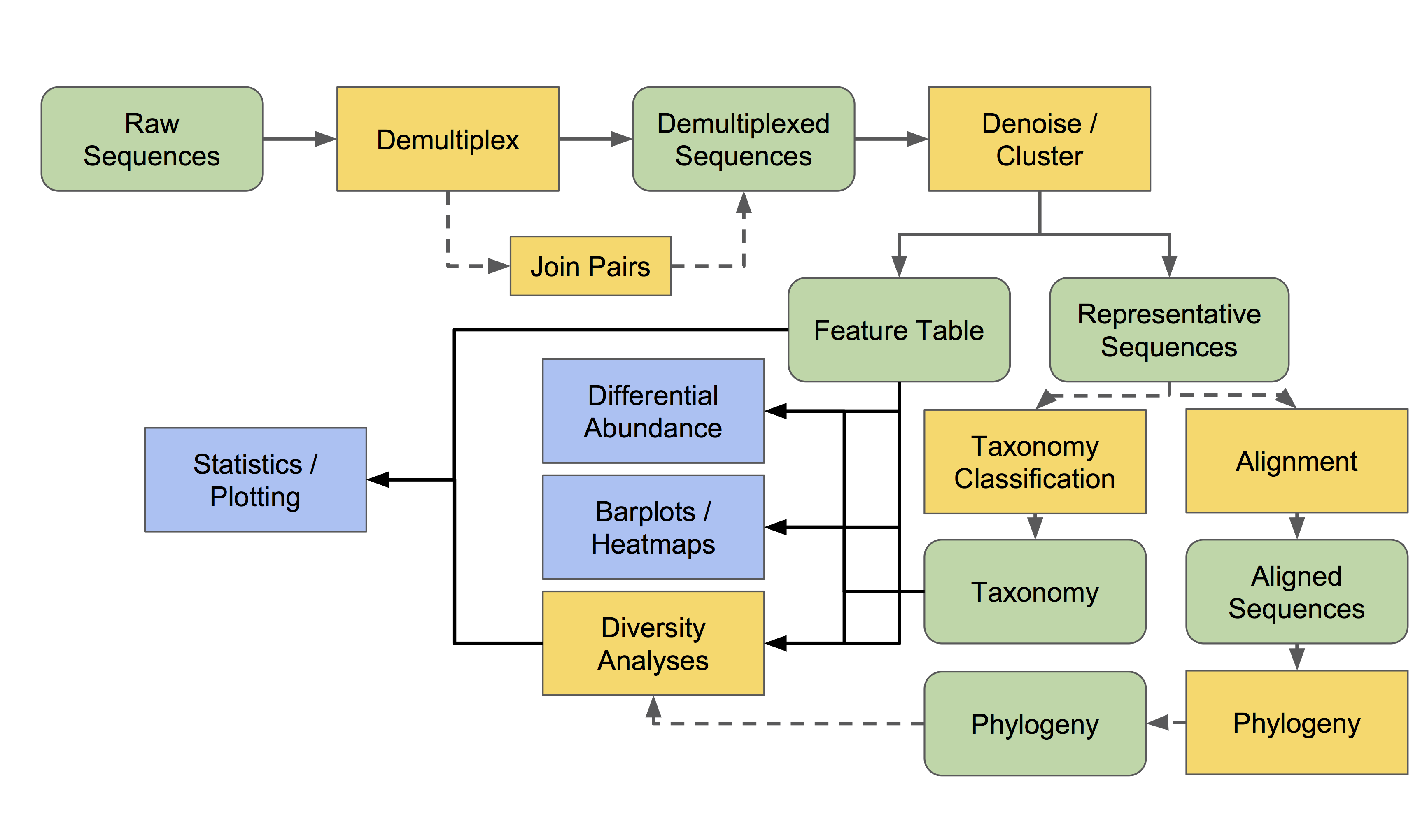

The process of demultiplexing (as it occurs in QIIME 2) will look something like the following workflow (ignore the right-hand side of this flow chart for now):

This flowchart describes all demultiplexing steps that are currently possible in QIIME 2, depending on the type of raw data you have imported. Usually only one of the different demultiplexing actions available in q2-demux or q2-cutadapt will be applicable for your data, and that is all you will need.

Read more about demultiplexing and give it a spin with the moving pictures tutorial (for single-end data) and Atacama soils tutorial (for paired-end data). Those tutorials cover EMP format data (as described in the importing docs). Have barcodes and primers in-line in your reads? See the cutadapt tutorials for using the demux methods in q2-cutadapt. Have dual-indexed reads or mixed-orientation reads or some other unusual format? Pray hard 🙏. Then check out the QIIME 2 forum to see if someone has found a workaround. 😉

Paired-end reads need to be joined at some point in the analysis. If you followed the Atacama soils tutorial, you will see that this happens automatically during denoising with q2-dada2. However, if you want to use q2-deblur or an OTU clustering method (as described in more detail below), use q2-vsearch to join these reads before proceeding, as shown in the demultiplexing workflow. To learn more about read joining, see the read joining tutorial.👯

If you are beginning to pull your hair and foam at the mouth, do not despair: QIIME 2 tends to get easier the further we travel in the “general overview”. Importing and demultiplexing raw sequencing data happens to be the most frustrating part for most new users 😤. But once you get the hang of it, it’s a piece of cake. 🍰

Denoising and clustering¶

Congratulations on getting this far! Denoising and clustering steps are slightly less confusing than importing and demultiplexing! 🎉😬🎉

The names for these steps are very descriptive:

We denoise our sequences to remove and/or correct noisy reads. 🔊

We dereplicate our sequences to reduce repetition and file size/memory requirements in downstream steps (don’t worry! we keep count of each replicate). 🕵️

We cluster sequences to collapse similar sequences (e.g., those that are ≥ 97% similar to each other) into single replicate sequences. This process, also known as OTU picking, was once a common procedure, used to simultaneously dereplicate but also perform a sort of quick-and-dirty denoising procedure (to capture stochastic sequencing and PCR errors, which should be rare and similar to more abundant centroid sequences). Use denoising methods instead if you can. Times have changed. Welcome to the future. 😎

Denoising¶

Let’s start with denoising, which is depicted on the right-hand side of the demultiplexing and denoising workflow.

The denoising methods currently available in QIIME 2 include DADA2 and Deblur. You can learn more about those methods by reading the original publications for each. Examples of DADA2 exist in the moving pictures tutorial and Fecal Microbiome Transplant study tutorial (for single-end data) and Atacama soils tutorial (for paired-end data). Examples of Deblur exist in the moving pictures tutorial (for single-end data) and read joining tutorial (for paired-end data). Note that deblur (and also vsearch dereplicate-sequences) should be preceded by basic quality-score-based filtering, but this is unnecessary for dada2. Both Deblur and DADA2 contain internal chimera checking methods and abundance filtering, so additional filtering should not be necessary following these methods. 🦁🐐🐍

To put it simply, these methods filter out noisy sequences, correct errors in marginal sequences (in the case of DADA2), remove chimeric sequences, remove singletons, join denoised paired-end reads (in the case of DADA2), and then dereplicate those sequences. 😎

The features produced by denoising methods go by many names, usually some variant of “sequence variant” (SV), “amplicon SV” (ASV), “actual SV”, “exact SV”… I believe we already referred to these as ASVs in this tutorial, so let’s keep our nomenclature consistent. 📏

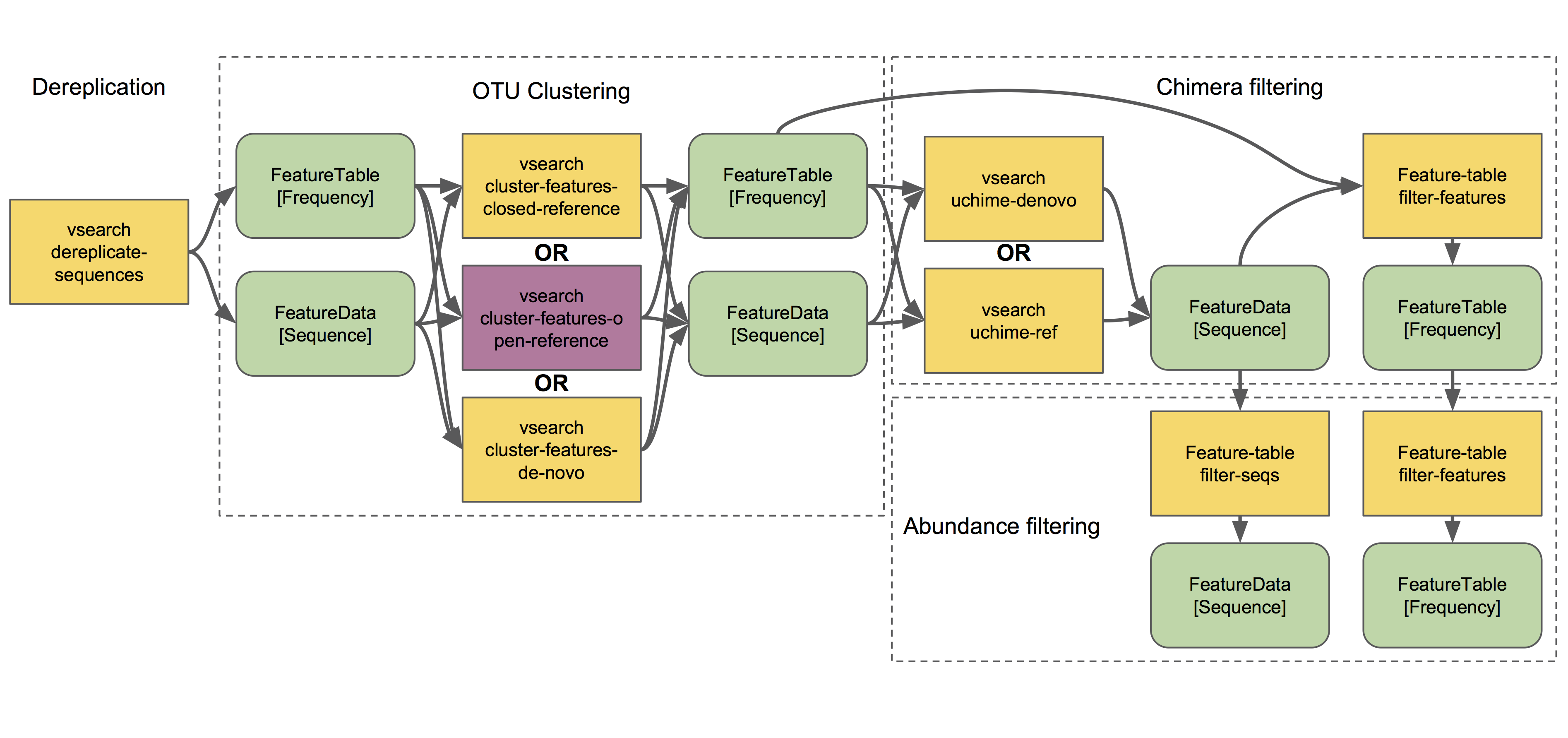

Clustering¶

Next we will discuss clustering methods. Dereplication (the simplest clustering method, effectively producing 100% OTUs, i.e., all unique sequences observed in the dataset) is also depicted in the demultiplexing and denoising workflow, and is the necessary starting point to all other clustering methods in QIIME 2, as shown here:

q2-vsearch implements three different OTU clustering strategies: de novo, closed reference, and open reference. All should be preceded by basic quality-score-based filtering and followed by chimera filtering and aggressive OTU filtering (the treacherous trio, a.k.a. the Bokulich method). 🙈🙉🙊

The OTU clustering tutorial demonstrates use of several q2-vsearch clustering methods. Don’t forget to read the chimera filtering tutorial!

The features produced by clustering methods are known as operational taxonomic units (OTUs), which is Esperanto for suboptimal, imprecise rubbish. 🚮

The Feature Table¶

The final products of all denoising and clustering methods/workflows are a FeatureTable[Frequency] (feature table) artifact and a FeatureData[Sequence] (representative sequences) artifact. These are two of the most important artifacts in an amplicon sequencing workflow, and are used for many downstream analyses, as discussed below. Indeed, feature tables are crucial to any QIIME 2 analysis, as the central record of all observations per sample. Such an important artifact deserves its own powerful plugin, q2-feature-table. We will not discuss all actions of this plugin in detail here (some are mentioned below), but it performs many useful operations on feature tables so familiarize yourself with its documentation! 😴

I repeat: feature tables are central to analysis in QIIME 2. Almost all analysis steps (i.e., following demultiplexing and denoising/clustering) involve feature tables in some way. Pay attention! 😳

Note

Want to see which sequences are associated with each feature ID? Use qiime metadata tabulate with your FeatureData[Sequence] artifact as input.

Congratulations! 🎉 You’ve made it past importing, demultiplexing, and denoising/clustering your data, which are the most complicated and difficult steps for most users (if only because there are so many ways to do it!). If you’ve made it this far, the rest should be easy peasy. Now begins the fun. 🍾

Taxonomy classification and taxonomic analyses¶

For many experiments, investigators aim to identify the organisms that are present in a sample. E.g., what genera or species are present in my samples? Are there any human pathogens in this patient’s sample? What’s swimming in my wine? 🍷🤑

We can do this by comparing our query sequences (i.e., our features, be they ASVs or OTUs) to a reference database of sequences with known taxonomic composition. Simply finding the closest alignment is not really good enough — because other sequences that are equally close matches or nearly as close may have different taxonomic annotations. So we use taxonomy classifiers to determine the closest taxonomic affiliation with some degree of confidence or consensus (which may not be a species name if one cannot be predicted with certainty!), based on alignment, k-mer frequencies, etc. Those interested in learning more about taxonomy classification in QIIME 2 can read until the cows come home. 🐄🐄🐄

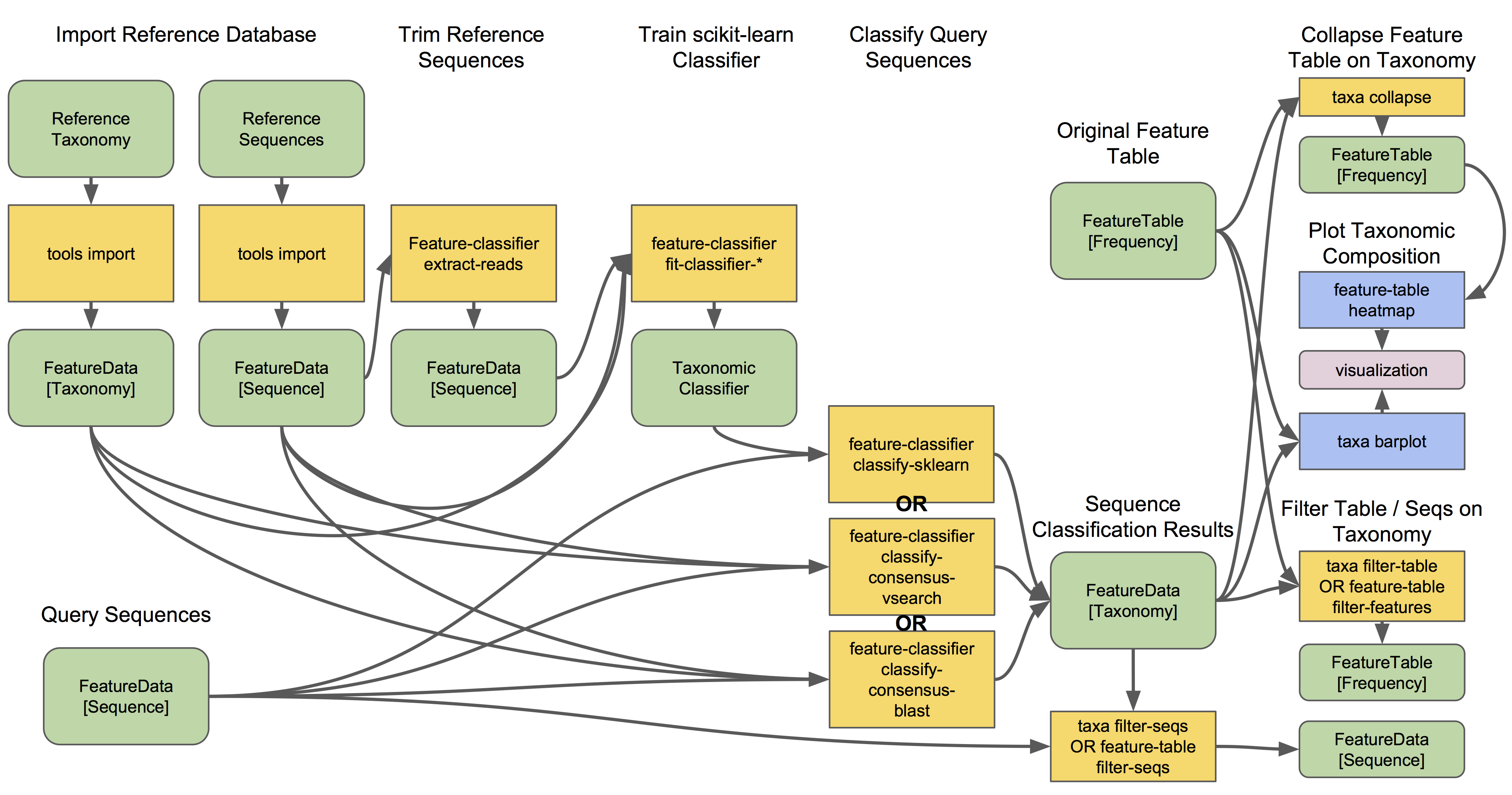

Let’s see what a taxonomy classification workflow might look like:

q2-feature-classifier contains three different classification methods. classify-consensus-blast and classify-consensus-vsearch are both alignment-based methods that find a consensus assignment across N top hits. These methods take reference database FeatureData[Taxonomy] and FeatureData[Sequence] files directly, and do not need to be pre-trained.

Machine-learning-based classification methods are available through classify-sklearn, and theoretically can apply any of the classification methods available in scikit-learn. These classifiers must be trained, e.g., to learn which features best distinguish each taxonomic group, adding an additional step to the classification process. Classifier training is reference database- and marker-gene-specific and only needs to happen once per marker-gene/reference database combination; that classifier may then be re-used as many times as you like without needing to re-train!

Most users do not even need to follow that tutorial and perform that training step, because the lovely QIIME 2 developers provide several pre-trained classifiers for public use. 🎅🎁🎅🎁🎅🎁

Which method is best? They are all pretty good, otherwise we wouldn’t bother exposing them here. 😎 But in general classify-sklearn with a Naive Bayes classifier can slightly outperform other methods we’ve tested based on several criteria for classification of 16S rRNA gene and fungal ITS sequences. It can be more difficult and frustrating for some users, however, since it requires that additional training step. That training step can be memory intensive, becoming a barrier for some users who are unable to use the pre-trained classifiers. Some users also prefer the alignment-based methods because their mode of operation is much more transparent and their parameters easier to manipulate (see the link above for description of these parameters and recommended settings for different applications).

Feature classification can be slow. It all depends on the number of sequences you have, and the number of reference sequences. OTU clustered sequences will take longer to classify (because often there are more). Filter low-abundance features out of your sequences file before classifying, and use smaller reference databases if possible if you have concerns about runtime. In practice, in “normal size” sequencing experiments (whatever that means 😜) we see variations between a few minutes (a few hundred features) to many hours (hundreds of thousands of features) for classification to complete. If you want to hang some numbers on there, check out our benchmarks for classifier runtime performance. 🏃⏱️

Feature classification can be memory intensive. We usually see minimum 4 GB RAM, maximum 32+ GB required. It all depends on the size of the reference sequences, their length, and number of query sequences…

Examples of using classify-sklearn are shown in the feature classifier tutorial and in the moving pictures tutorial. The taxonomy flowchart should make the other classifier methods reasonably clear.

All classifiers produce a FeatureData[Taxonomy] artifact containing a list of taxonomy classifications for each query sequence.

Note

Want to see which sequences and taxonomic assignments are associated with each feature ID? Use qiime metadata tabulate with your FeatureData[Taxonomy] and FeatureData[Sequence] artifacts as input.

Now that we have classified our sequences¶

Taxonomic classification opens us up to a whole new world of possibilities. 🌎

Here are the main actions that are enabled by having a FeatureData[Taxonomy] artifact:

Collapse your feature table with

taxa collapse! This merges all features that share the same taxonomic assignment into a single feature. That taxonomic assignment becomes the feature ID in the new feature table. This feature table can be used in all the same ways as the original. Some users may be specifically interested in performing, e.g., taxonomy-informed diversity analyses, but at the very least anyone assigning taxonomy is probably interested in testing differential abundance of those taxa. Comparing differential abundance analyses using taxa as features vs. using ASVs or OTUs as features can be diagnostic and informative for various analyses. 🌂Plot your taxonomic composition to see the abundance of various taxa in each of your samples. Check out

taxa barplotandfeature-table heatmapfor more details. 📊Filter your feature table and representative sequences (

FeatureData[Sequence]artifact) to remove certain taxonomic groups. This is useful for removing known contaminants or non-target groups, e.g., host DNA including mitochondrial or chloroplast sequences. It can also be useful for focusing on specific groups for deeper analysis. See the filtering tutorial for more details and examples. 🌿🐀

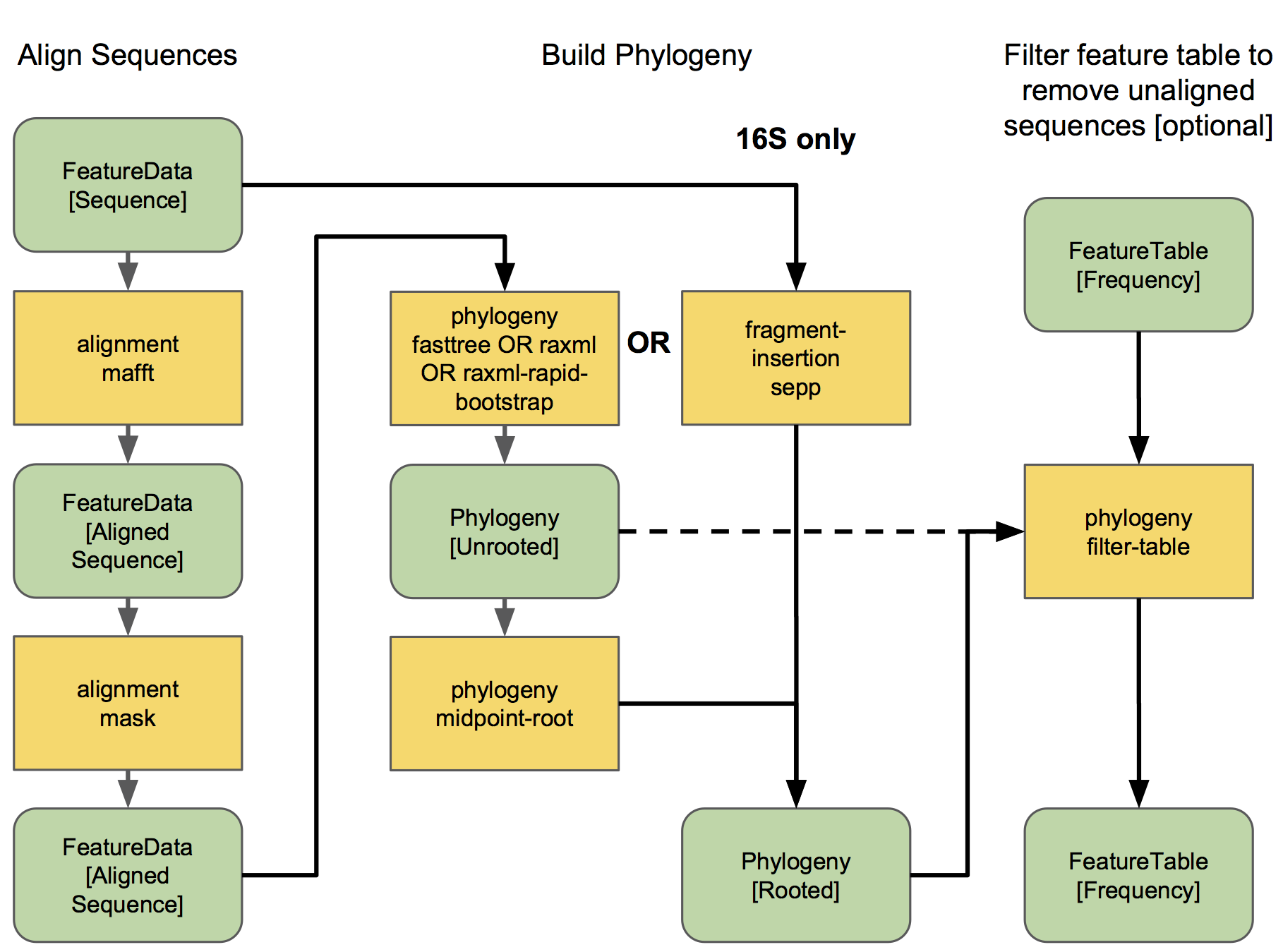

Sequence alignment and phylogeny building¶

Many diversity analyses rely on the phylogenetic similarity between individual features. If you are sequencing phylogenetic markers (e.g., 16S rRNA genes), you can align these sequences to assess the phylogenetic relationship between each of your features. This phylogeny can then be used by other downstream analyses, such as UniFrac distance analyses.

The different options for aligning sequences and producing a phylogeny are shown in the flowchart below. For detailed description of alignment/phylogeny building, see the q2-phylogeny tutorial and the q2-fragment-insertion tutorial. 🌳

Now that we have our Phylogeny[Rooted] artifact, pay attention to where it is used below. 👀

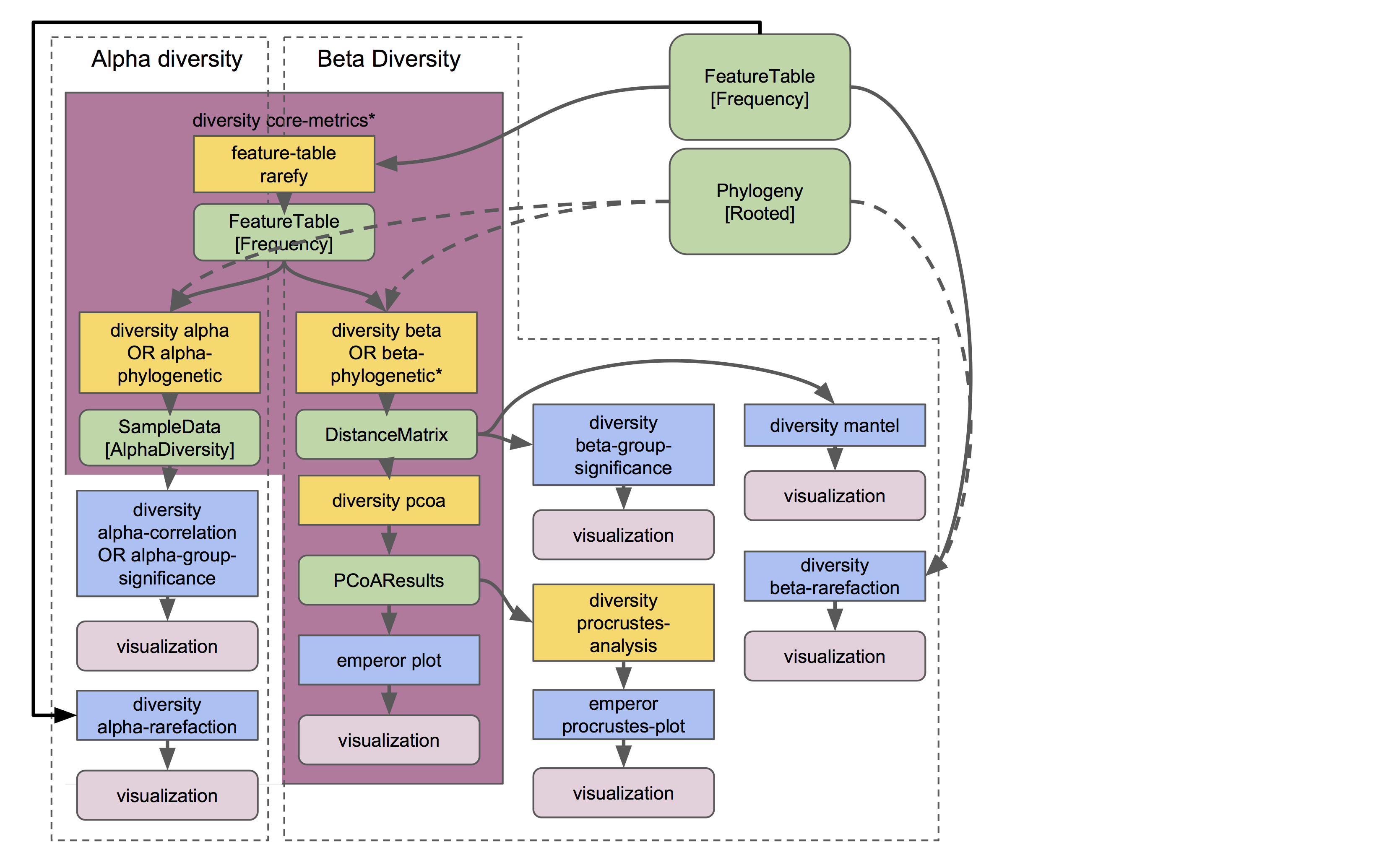

Diversity analysis¶

In microbiome experiments, investigators frequently wonder about things like:

How many different species/OTUs/ASVs are present in my samples?

How much phylogenetic diversity is present in each sample?

How similar/different are individual samples and groups of samples?

What factors (e.g., pH, elevation, blood pressure, body site, or host species just to name a few examples) are associated with differences in microbial composition and biodiversity?

And more. These questions can be answered by alpha- and beta-diversity analyses. Alpha diversity measures the level of diversity within individual samples. Beta diversity measures the level of diversity or dissimilarity between samples. We can then use this information to statistically test whether alpha diversity is different between groups of samples (indicating, e.g., that those groups have more/less species richness) and whether beta diversity is greater between groups (indicating, e.g., that samples within a group are more similar to each other than those in another group, suggesting that membership within these groups is shaping the microbial composition of those samples).

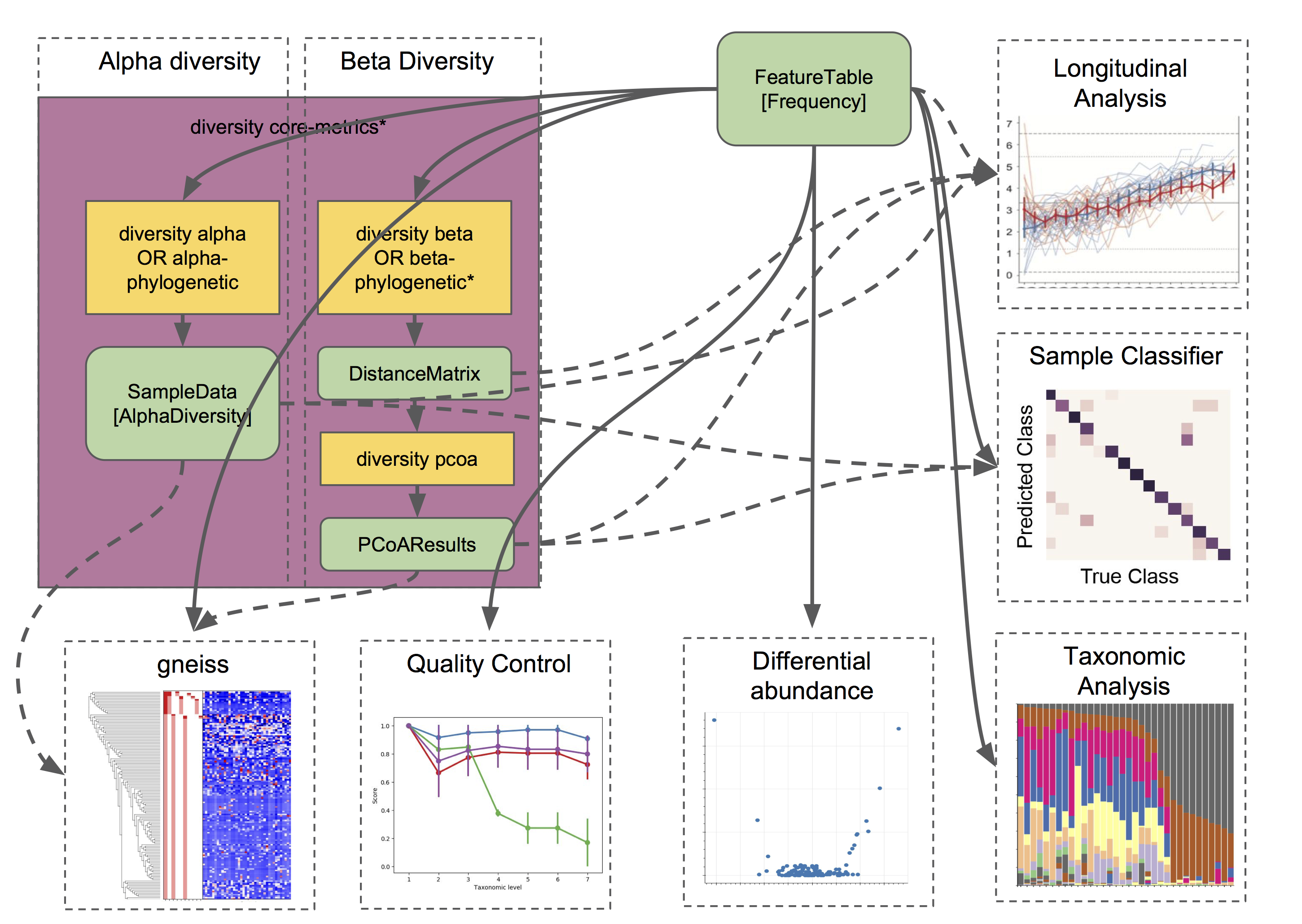

Different types of diversity analyses in QIIME 2 are exemplified in the the moving pictures tutorial and fecal microbiome transplant tutorial, and the full suite of analyses used to generate diversity artifacts are shown here (and that’s not all: note that other plugins can operate on these artifacts, as described further in this guide):

The q2-diversity plugin contains many different useful actions! Check them out to learn more. As you can see in the flowchart, the diversity core-metrics* pipelines (core-metrics and core-metrics-phylogenetic) encompass many different core diversity commands, and in the process produce the main diversity-related artifacts that can be used in downstream analyses. These are:

SampleData[AlphaDiversity]artifacts, which contain alpha diversity estimates for each sample in your feature table. This is the chief artifact for alpha diversity analyses.DistanceMatrixartifacts, containing the pairwise distance/dissimilarity between each pair of samples in your feature table. This is the chief artifact for beta diversity analyses.PCoAResultsartifacts, containing principal coordinates ordination results for each distance/dissimilarity metric. Principal coordinates analysis is a dimension reduction technique, facilitating visual comparisons of sample (dis)simmilarities in 2D or 3D space.

These are the main diversity-related artifacts. Keep them safe! We can re-use these data in all sorts of downstream analyses, or in the various actions of q2-diversity shown in the flowchart. Most of these actions are demonstrated in the moving pictures tutorial so head on over there to learn more! ☔

Note that there are many, many different alpha- and beta-diversity metrics that are available in QIIME 2. To learn more (and figure out whose paper you should be citing!), check out that neat resource, which was contributed by a friendly QIIME 2 user to enlighten all of us. Thanks Stephanie! 😁🙏😁🙏😁🙏

Fun with feature tables¶

At this point you have a feature table, taxonomy classification results, alpha diversity, and beta diversity results. Oh my! 🤓

Taxonomic and diversity analyses, as described above, are the basic types of analyses that most QIIME 2 users are probably going to need to perform at some point. However, this is only the beginning, and there are so many more advanced analyses at our fingertips. 🖐️⌨️

We are only going to give a brief overview, since each of these analyses has its own in-depth tutorial to guide us:

Analyze longitudinal data: q2-longitudinal is a plugin for performing statistical analyses of longitudinal experiments, i.e., where samples are collected from individual patients/subjects/sites repeatedly over time. This includes longitudinal studies of alpha and beta diversity, and some really awesome, interactive plots. 📈🍝

- Predict the future (or the past) 🔮: q2-sample-classifier is a plugin for machine-learning 🤖 analyses of feature data. Both classification and regression models are supported. This allows you to do things like:

predict sample metadata as a function of feature data (e.g., can we use a fecal sample to predict cancer susceptibility? Or predict wine quality based on the microbial composition of grapes before fermentation?). 🍇

identify features that are predictive of different sample characteristics. 🚀

quantify rates of microbial maturation (e.g., to track normal microbiome development in the infant gut and the impacts of persistent malnutrition or antibiotics, diet, and delivery mode). 👶

predict outliers and mislabeled samples. 👹

Differential abundance is used to determine which features are significantly more/less abundant in different groups of samples. QIIME 2 currently supports a few different approaches to differential abundance testing, including ancom and

ancom-bc(actions inq2-composition). 👾👾👾- Evaluate and control data quality: q2-quality-control is a plugin for evaluating and controlling sequence data quality. This includes actions that:

test the accuracy of different bioinformatic or molecular methods, or of run-to-run quality variation. These actions are typically used if users have samples with known compositions, e.g., mock communities, since accuracy is calculated as the similarity between the observed and expected compositions, sequences, etc. But more creative uses may be possible… 🐢

filter sequences based on alignment to a reference database, or that contain specific short sections of DNA (e.g., primer sequences). This is useful for removing sequences that match a specific group of organisms, non-target DNA, or other nonsense. 🙃

And that’s just a brief overview! QIIME 2 continues to grow, so stay tuned for more plugins in future releases 📻, and keep your eyes peeled for third-party plugins that will continue to expand the functionality availability in QIIME 2. 👀

Now go forth an have fun! 💃